Research project by Abhay Nagaraj

Molecular modeling of electrochemical processes is notoriously difficult due to the complexity of the electrode-electrolyte interface and the non-equilibrium chemical and diffusive processes taking place under working conditions. Enhancing molecular simulation with machine learning techniques makes realistic modeling of these processes feasible.

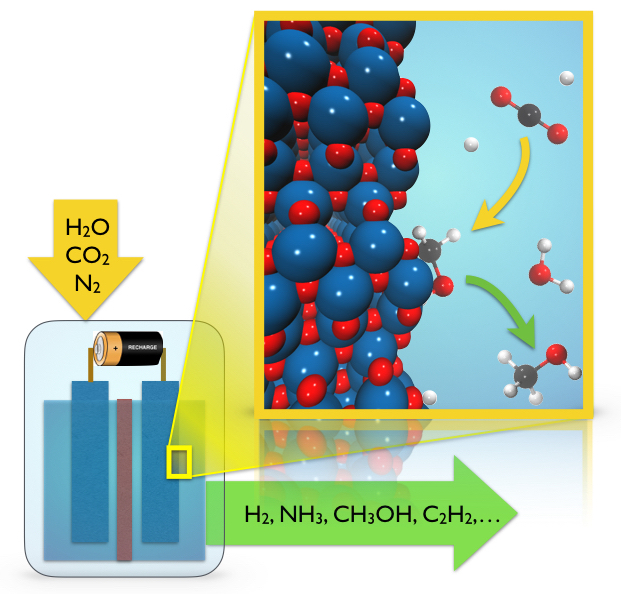

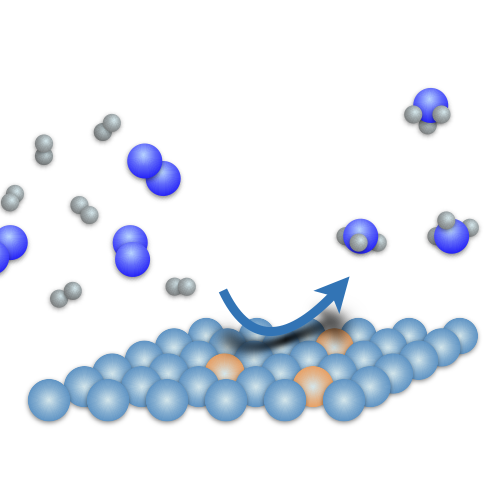

Research project by Matilde Mercuri

We are developing a workflow for generating high-quality quantum chemical data on nitrogen-fixing coordination complexes, combining DFT calculations, MLPs and enhanced sampling to characterize their electronic and thermodynamic properties. The resulting dataset can enable the development of machine learning models for catalyst discovery without the need for exhaustive simulations of reaction pathways across diverse molecular scaffolds.

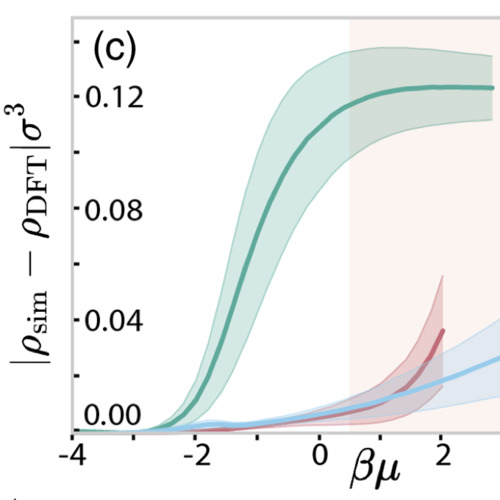

Research project by Jacobus Dijkman

We introduce a method for learning a neural-network approximation of the Helmholtz free-energy functional by training exclusively on a dataset of radial distribution functions, circumventing the need to sample costly heterogeneous density profiles in a wide variety of external potentials.

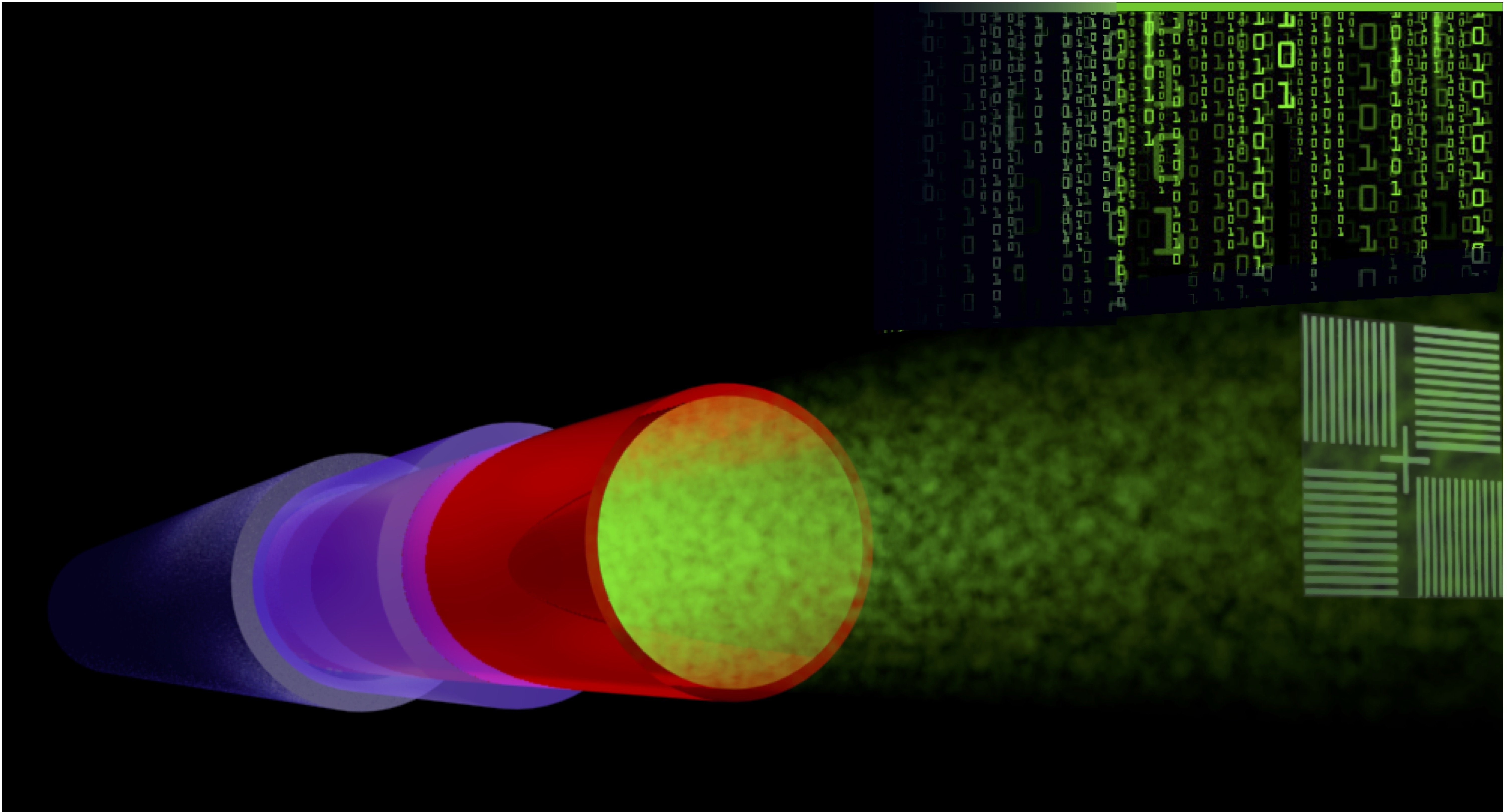

Research project by Maximilian Lipp

We explore deep learning and Bayesian optimization techniques to push the boundaries of compact semiconductor metrology tools. Label-free optical imaging methods with a spatial resolution beyond the Abbe diffraction limit and a temporal resolution beyond the Nyquist limit are being developed to characterize multi-layer nanostructures.

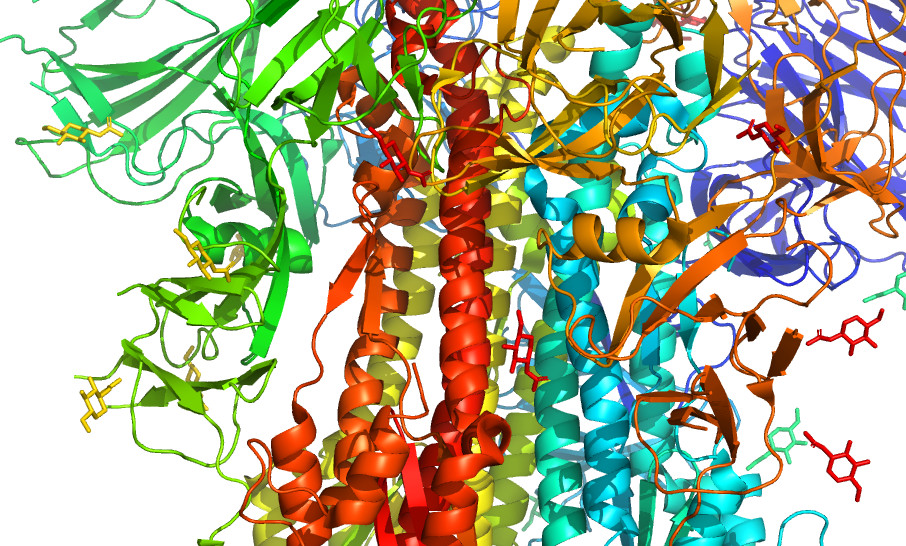

Research project by Cong Liu

The stability of the antigen is crucial for the development of effective vaccines against highly contagious viruses. By training deep-learning models to suggest mutations and predict protein stability, we aim to accelerate vaccine research and design.

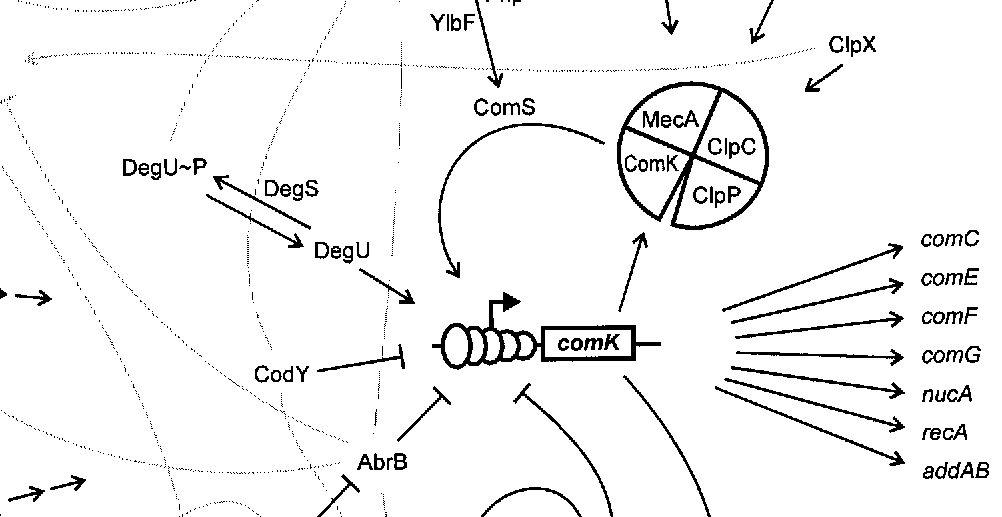

Research project by Teodora Pandeva

Unraveling the co-expression of genes across studies enhances the understanding of cellular processes. Inferring gene co-expression networks from transcriptome data presents many challenges, including spurious gene correlations, sample correlations, and batch effects. To address these complexities, we introduce a robust method for high-dimensional graph inference from multiple independent studies.

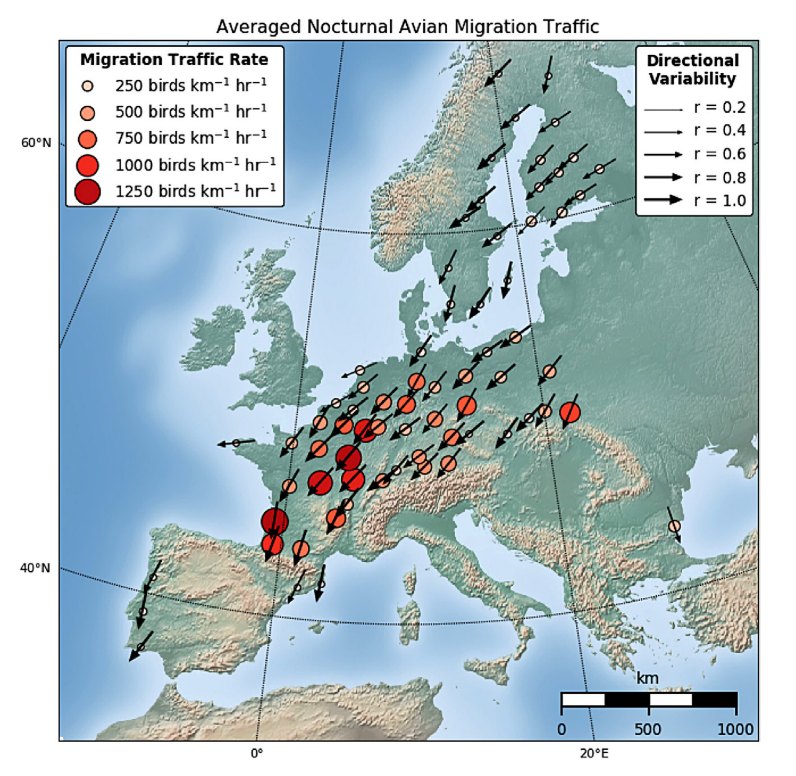

Research project by Fiona Lippert

Weather radar networks provide wide-ranging opportunities for ecologists to quantify and predict movements of airborne organisms over unprecedented geographical expanses. We propose FluxRGNN, a recurrent graph neural network that is based on a generic mechanistic description of population-level movements across the Voronoi tessellation of radar sites.

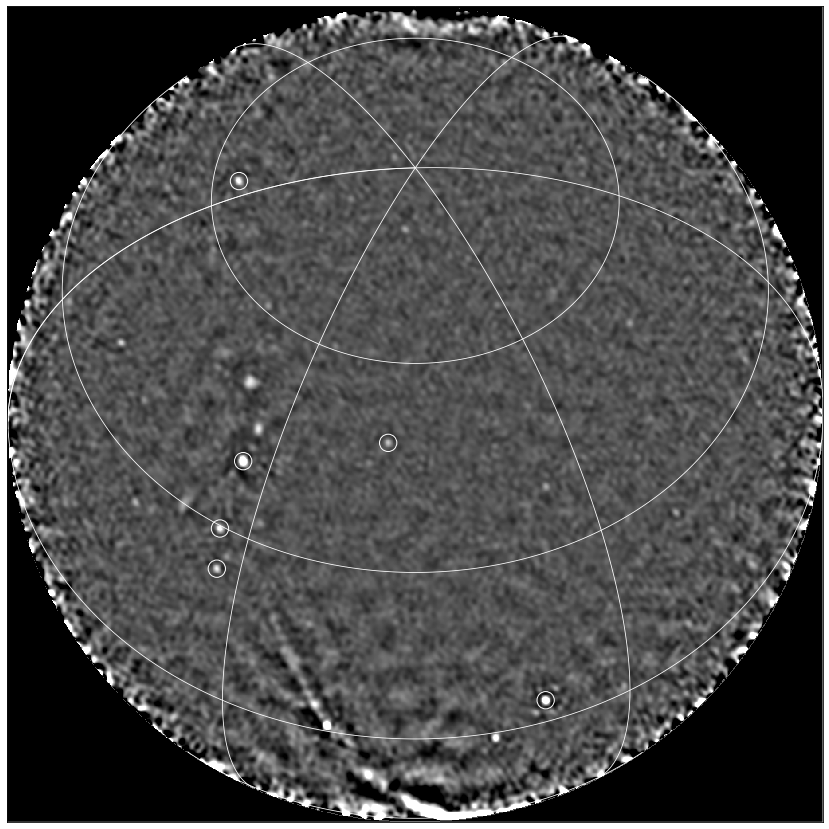

Research project by David Ruhe

We present a methodology for automated real-time analysis of a radio image data stream, with the goal of finding transient sources. In contrast to previous work, the transients we are interested in occur on a time scale where dispersion starts to play a role, so we must search a higher-dimensional data space while still working fast enough to keep up with the data stream in real time.
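
As a rough illustration of why dispersion adds a search dimension (this relation is standard background and an assumption here, not taken from the project summary): a pulse crossing ionized gas arrives later at lower radio frequencies, with a delay set by the dispersion measure $\mathrm{DM}$, which is not known in advance for a new source.

$$
\Delta t \approx 4.15\,\mathrm{ms} \times \left(\frac{\mathrm{DM}}{\mathrm{pc\,cm^{-3}}}\right) \left[\left(\frac{\nu_\mathrm{lo}}{\mathrm{GHz}}\right)^{-2} - \left(\frac{\nu_\mathrm{hi}}{\mathrm{GHz}}\right)^{-2}\right]
$$

Here $\nu_\mathrm{lo}$ and $\nu_\mathrm{hi}$ denote the lower and upper edges of the observing band. Once $\Delta t$ becomes comparable to the time between images, a search over image position and time alone may miss the signal, so trial values of $\mathrm{DM}$ have to be searched as well.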

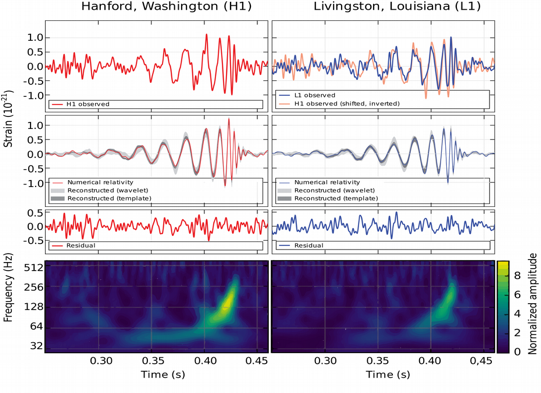

Research project by Benjamin Miller

Peregrine is a new sequential simulation-based inference approach designed to study broad classes of gravitational-wave signals. We show that it can fully reconstruct the posterior distributions for every parameter of a spinning, precessing compact binary coalescence using one of the most physically detailed and computationally expensive waveform approximants (SEOBNRv4PHM).

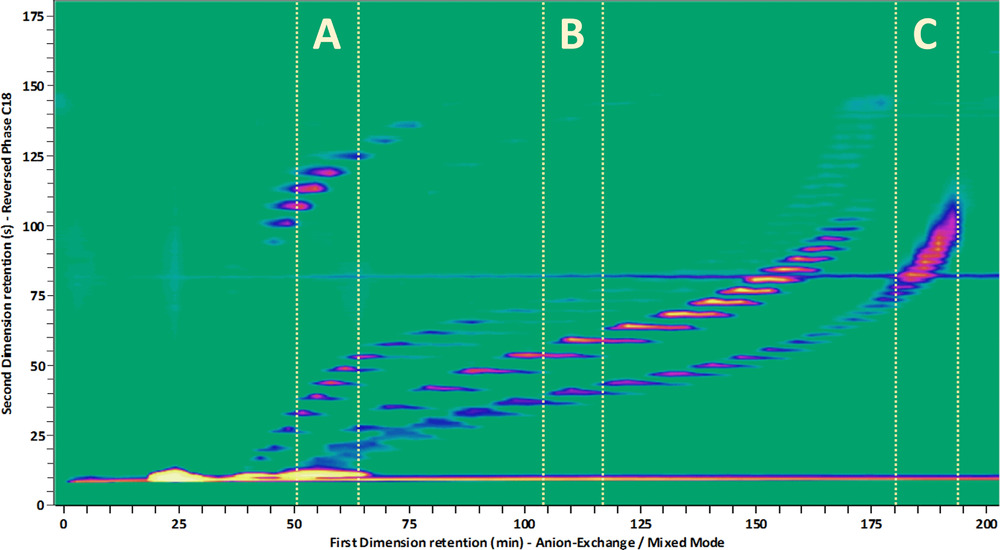

Research project by Jim Boelrijk

We developed a Bayesian optimization algorithm for the automated, unsupervised development of gradient programs. The algorithm is tailored to liquid chromatography and uses a Gaussian process model with a novel covariance kernel. It can operate in both single- and multi-objective settings.
